📖 The Context: "Life has a price"

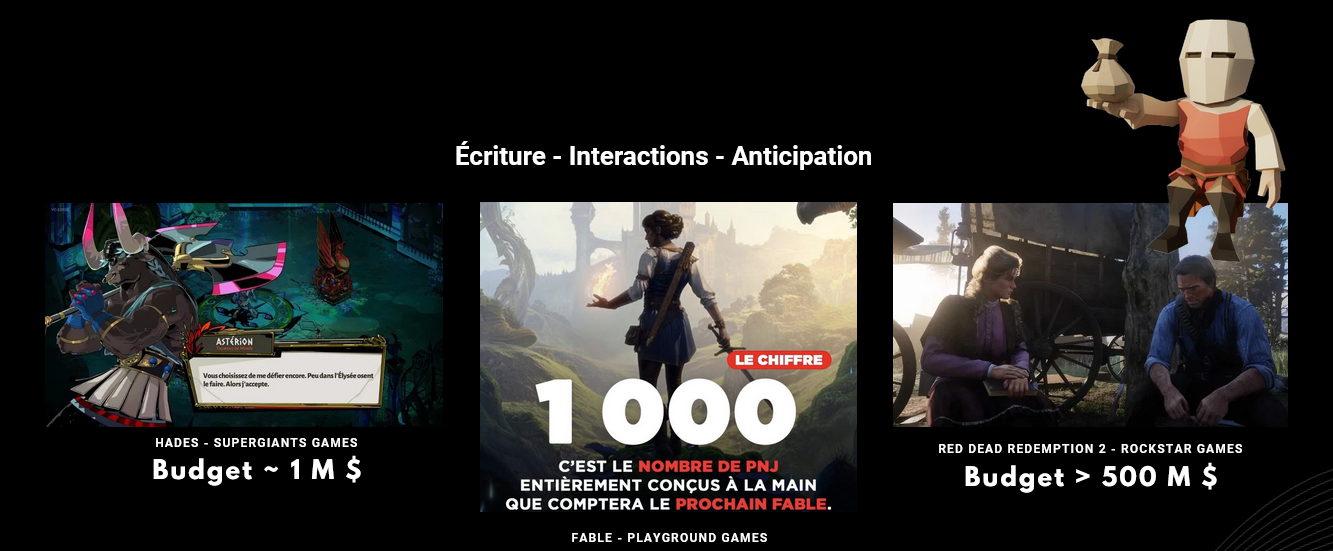

In the video game industry, creating believable, lifelike NPCs (Non-Playable Characters) is extremely expensive. Acclaimed titles like Red Dead Redemption 2 or Hades invest millions to reach that level of realism and immersion. Unfortunately, this is a luxury often out of reach for indie studios and smaller teams.

NPCForge was born from this realization. Our goal: to offer independent developers an affordable and accessible way to bring their virtual worlds to life with NPCs capable of unlimited dialogue and equipped with persistent memory, without blowing their budgets. The idea is to draw inspiration from groundbreaking research projects like Voyager or Stanford's Smallville and apply them concretely to video game development.

🐺 The Practical Case: A 100% AI Werewolf Game

To test and challenge our solution under real conditions, the team developed a demo game: a game of Werewolf in which the player is merely a spectator. Roles are assigned to AIs, who then discuss among themselves, argue, and vote until the game ends. This highly visual demonstration showcases the API's ability to manage multiple complex conversations at once.

🎯 My Role: Project Manager & Plugin Dev

NPCForge was born from the collaboration of 4 developers (Mathieu Rio, Tristan Bros, Hugo Fontaine, and myself) spread across the globe (South Korea, Sweden, Germany). I wore two major hats on this project:

1. Project Manager

This was my first management experience on a project this open-ended, and with a team separated by significant geographic distance. My challenge was to coordinate three distinct divisions (API, Plugin, Demo Game) while enforcing a Scrum methodology. I also faced the difficult task of establishing my legitimacy as the overall decision-maker, a position I maintained by making the structural decisions while giving each lead full autonomy over how their part was implemented.

2. Lead Developer on the UE5 Plugin

I chose to handle the Unreal Engine plugin because it was the central piece of the system. Having one foot in the game engine (where the visual magic happens) and the other in the Golang API gave me a complete view of the project and let me guide the teams effectively. To streamline the work and meet deadlines, I constantly had to juggle C++, Blueprint, and Go.

🧠 Under the Hood: Architecture & Technical Challenges

The core of the system relies on a clear separation of responsibilities between the game engine (a native C++ plugin for Unreal Engine 5) and a centralized application server.

⚙️ Golang at the Core of the System: The Conductor

Written in Go, the backend acts as the central brain of the infrastructure. Go was chosen for its high performance and robust concurrency model (goroutines). The backend has two roles:

- Coordinate multiple game instances simultaneously while managing persistence (PostgreSQL) and the ongoing evolution of each AI's memory.

- Serve as a modular gateway to the OpenAI API, chosen for its strong balance of quality, price, and speed. Because the model is only ever reached through this server, the language model (LLM) can be replaced or upgraded later without touching the critical game-side code.

🚧 The Technical Challenge: The Bottleneck

In our early iterations, all communication between the game and the server went through a single WebSocket connection. Since our NPCs were designed to be proactive, they constantly pinged the server to evaluate their environment and propose interactions.

This model quickly created a critical bottleneck: the request queue became congested, causing unacceptable latency for a real-time application and shattering any illusion of fluidity for the players.
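A rough back-of-the-envelope model shows why this does not scale. Assume (an assumption, not a measurement from the project) that the single connection handled requests strictly one at a time and that each LLM round trip takes about the same time t. With N NPCs firing at once, the k-th request waits for every request ahead of it, so the last NPC's answer arrives after roughly N round trips:

```latex
T_{\text{wait}}(k) \approx (k - 1)\,t
\qquad\Longrightarrow\qquad
T_{\text{response}}(N) \approx (N - 1)\,t + t = N\,t
```

With hypothetical values of N = 8 NPCs and t = 1 s, the last NPC would respond about 8 s after the burst began, whereas independent concurrent calls keep each response close to t, within the limits of the API's rate quotas.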

💡 The Solution: "Asynchronous Brains" and Concurrency

To remove this bottleneck, we completely rethought the communication logic to take full advantage of Go's concurrency:

- Cognitive Emancipation via Goroutines: Instead of being processed sequentially through a central queue, each AI is now attached to its own independent "asynchronous brain" on the server side. Each brain runs in its own goroutine and calls the external API concurrently with the others, which all but eliminates the global queue.

- Cognitive Culling and Dynamic Optimization: Blindly parallelizing calls risked saturating API quotas and running up huge costs. To address this, we built an optimization loop with Unreal Engine in which the game engine actively controls the "thinking" state of the AIs. If an NPC is too far from the player or has no potential interaction, the server suspends its cognitive process (the culling principle). Together, these two mechanisms keep overall performance high while holding infrastructure costs to an acceptable level.

🔭 The Vision and What's Next

Although the AIs in our demo are limited to conversation, our long-term vision is much broader. The next major step is to let NPCs move around and actively interact with their environment.

Future projects include:

- Reducing AI "hallucinations" for more predictable behavior.

- Abstracting the API to make it more generic and accommodate a wider variety of game types.

- Creating simplified user interfaces to facilitate integration on the developer side.

- Potential ports to other game engines on the market.